Daily Brief: The U.S. Chip Industry, Logistic Curves & The Death of AI Scaling Laws

Beware of the ceiling at the end of the exponential curve.

Summary of this Daily Brief: (1) It’s good to be back after a few sick days, (2) the US chip industry is monopolistic, and (3) viral outbreaks, Moore’s Law, AI Scaling Laws, and business engine growth all have one thing in common: logistic curves (or S-curves).

Read on.

I’m back, and here’s the plan:

This weekend I was preparing to send out the newsletter on the US Chip industry, but then I contracted a respiratory virus.

Once I was born anew yesterday, I found myself in a completely different state of mind: I was much less enthused about pushing out some dry market analysis on the US-based semiconductors.

This was partially because,

The market is pretty monopolistic, and thus somewhat uninteresting,

I felt like I was writing about a business ecosystem for which the editorial landscape is oversaturated,

I wasn’t saying anything new or particularly insightful - I was just reporting the news.

So rather than go too deep into the landscape of chips, I’ll start this Daily Brief with a bird’s eye view of what I learned about US based chip companies in the process of researching that newsletter — THEN we’ll dive into what I actually care about:

The exponential growth seen in AI scaling laws and silicon chip development needs to be complemented by a healthy conversation about logistic curves.

With that, let’s get at it.

US Chips in Brief

The market for integrated circuits (silicon-based chips) has 3 major verticals in the US:

Data Centers (Web Servers, Cloud Computing, AI infrastructure)

Personal Computers (Desktops, Laptops, Gaming)

Phones (iPhone + Androids)

Across these major markets, there are 3 types of logic chips (the integrated circuits that do all the data processing and computing):

CPUs (Central Processing Units) - used in Web Servers, Cloud Computing, Personal Computers, etc.

GPUs (Graphics Processing Units) - used in AI Data Centers for machine learning, and in video graphics for gaming.

SoCs (Systems-on-Chip) - energy efficient universal computers (from processing, to memory, to radio) for smartphones.

There are 3 major players across CPUs and GPUs (Intel, AMD, and Nvidia), and 2 major players in SoCs:

CPUs (Intel 70%, AMD 30%) - excluding Apple Macs

GPUs (Nvidia 88%, AMD 12%) - excluding Apple Macs

SoCs (Apple 55%, Qualcomm 45%)

A brief comment on Apple and SoCs:

Apple’s market share for SoCs is determined entirely by the market share of iPhones, whereas Qualcomm supplies essentially all SoCs (90+%) for Androids. Moreover, Apple has strong branding and a fully integrated software+hardware ecosystem, so while its chips are absolutely incredible, consumers are much more concerned with the iPhone’s user experience than anything else.

So long as iPhone customers are happy with the platform, Apple’s SoC position will remain strong, whereas Qualcomm competes in (and essentially monopolizes) the US-based SoC market for all Android phones.

The overall theme is that Apple vertically integrates its chips with its own consumer products (from iPhones to Macs), leaving the rest of the industry divided between Nvidia, AMD, Intel (for processing) and Qualcomm (Androids).

In a Nutshell:

That is essentially what I call “Big Semi-Con”. To help you remember the breakdown, I made some memes.

Here’s Morpheus with Nvidia glasses (high fidelity graphics) presenting the options of AMD vs. Intel in the CPU Processing War:

And don’t forget the SoC Duel between Trinity and Neo (they’re friends, don’t worry):

With the major players identified, possibly more interesting is the breakdown of how these companies run their business. The US Chip industry essentially has 3 business structures:

Fabless — they design the chips, but outsource the manufacturing.

Foundry — they manufacture the chips, and sell their production capabilities to fabless designers.

IDM (Integrated Device Manufacturer) - a term coined by Intel that means they both design and manufacture chips (design + fab in-house).

Of the Big 5 companies that dominate the US chip business, only Intel is an IDM. All the others (Nvidia, AMD, Apple and Qualcomm) outsource their fabrication to TSMC, which surpassed Intel as the leading chip manufacturer in the 2000s and has consistently been the global industry leader among foundries.

At present, the standard pipeline for manufacturing chips in the US is:

Find clients looking for a specific application of computing hardware.

Design a chip tailored to that application.

Outsource fabrication to TSMC.

Get bought by one of the 5 players from Big Semi-Con.

This is a little tongue-in-cheek… but not far from the truth.

Let’s frame this in terms of a standard business/management consulting framework for growth — known as Build, Buy, Partner. From what I’ve seen, the US semi-con landscape follows a standard cycle:

Small fabless companies build (for some niche vertical)

Big fabless companies buy (talent and small companies)

They all partner with foundries (primarily TSMC)

and the cycle continues. In recent years, most growth for these major tech titans has been driven by M&A …

At least until November 30, 2022.

Moore’s Law meets AI Scaling Laws

As I mentioned in last week’s newsletter, the world of computing hardware changed dramatically when ChatGPT came online.

Given the hype cycle for LLMs and AI over the past two years, it’s hard to imagine, but language models and neural networks have been around for decades. Prior to November 2022, the consensus was that the computing demands were expensive and the gains were marginal.

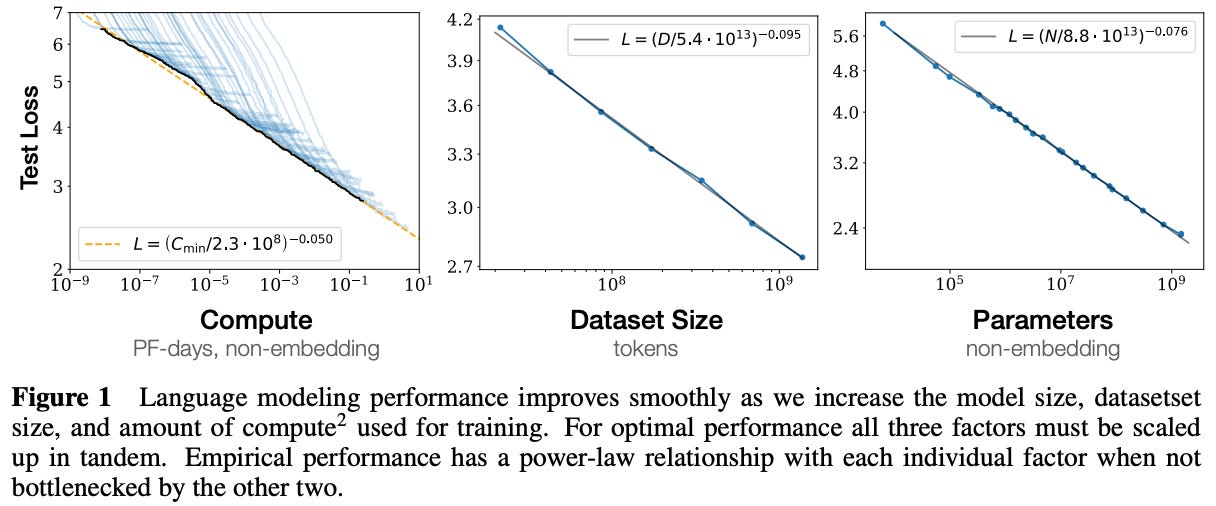

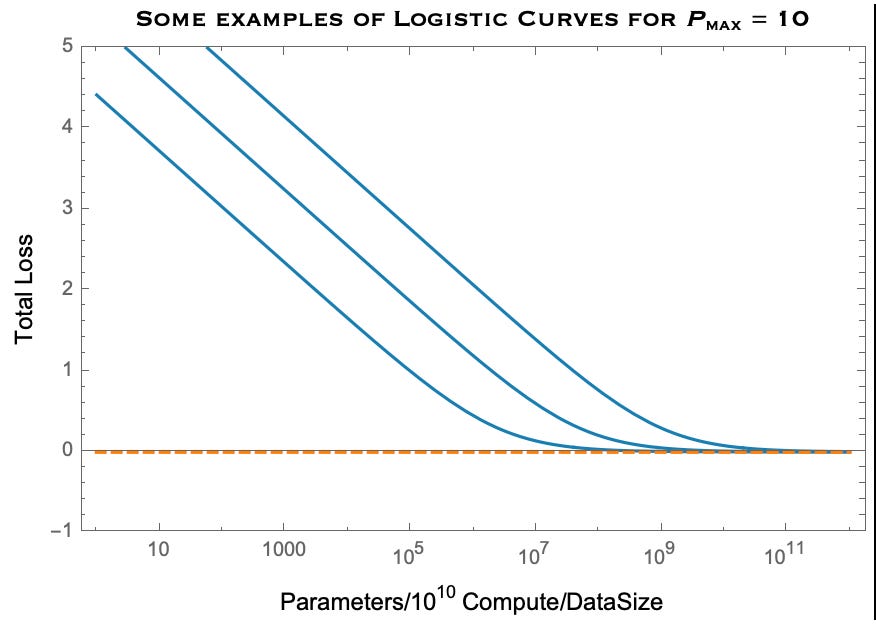

What really changed the landscape were (1) Transformer Networks, and (2) a paper published by OpenAI in 2020 that found the performance of Language Models improved predictably (the loss function fell as a power law) when one scaled up the compute, training data, and model size. This is shown below:

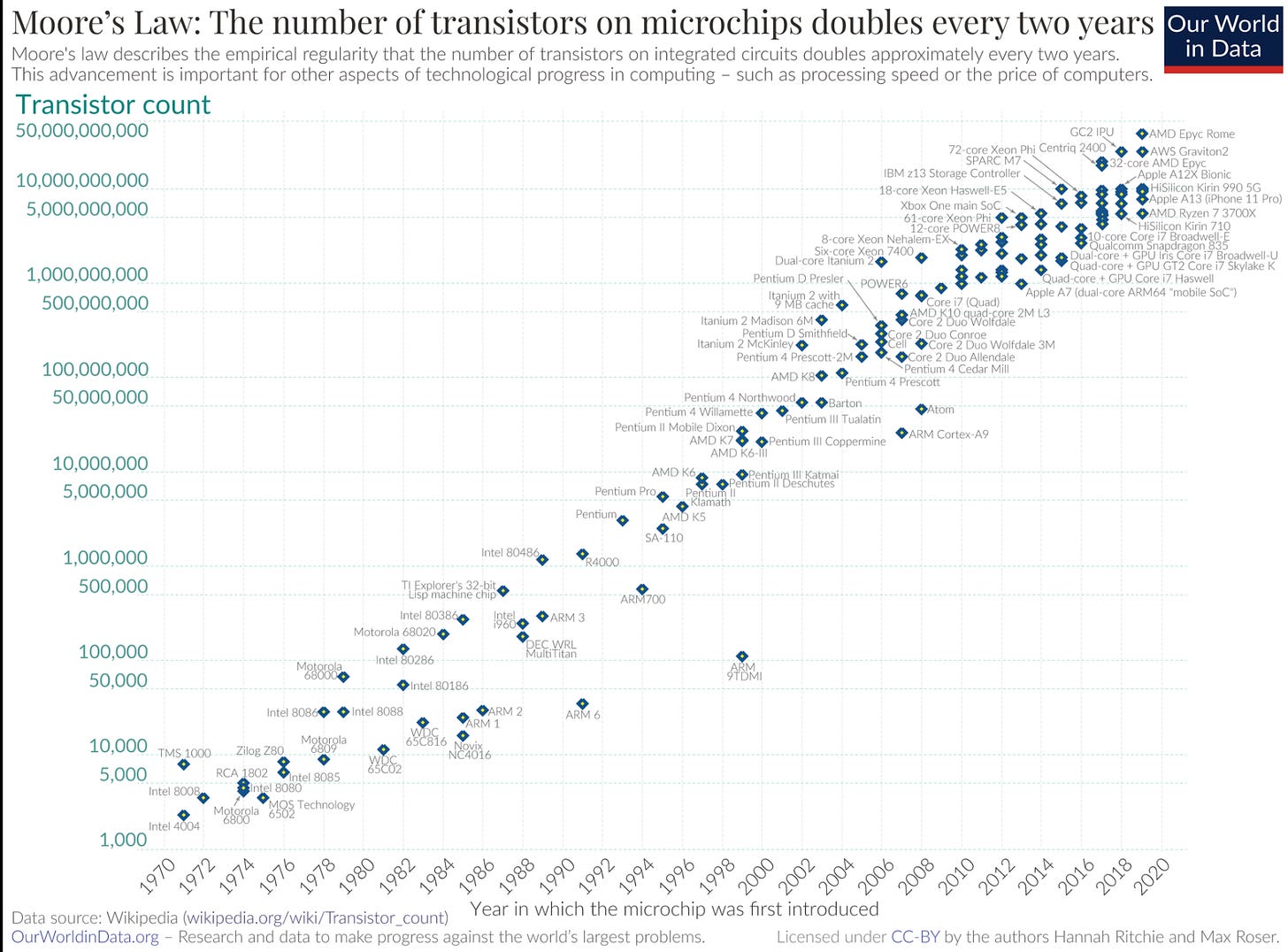

These trends are known informally as the AI Scaling Laws. The full open-access paper can be found on the arXiv [2001.08361]. Despite the underwhelming profitability of these models, everyone continues to point to Moore’s Law (shown below) as the reason one should expect costs to go down, and that the capabilities of language models should continue improving over time - ad infinitum.

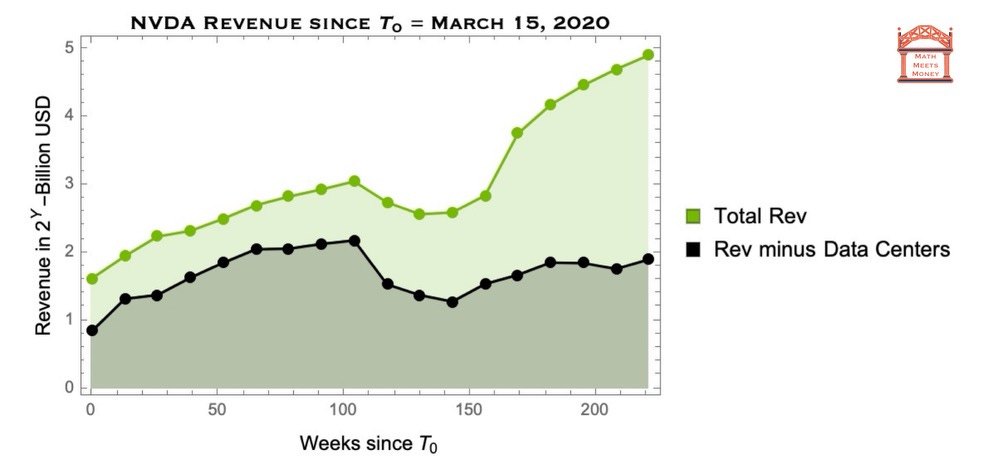

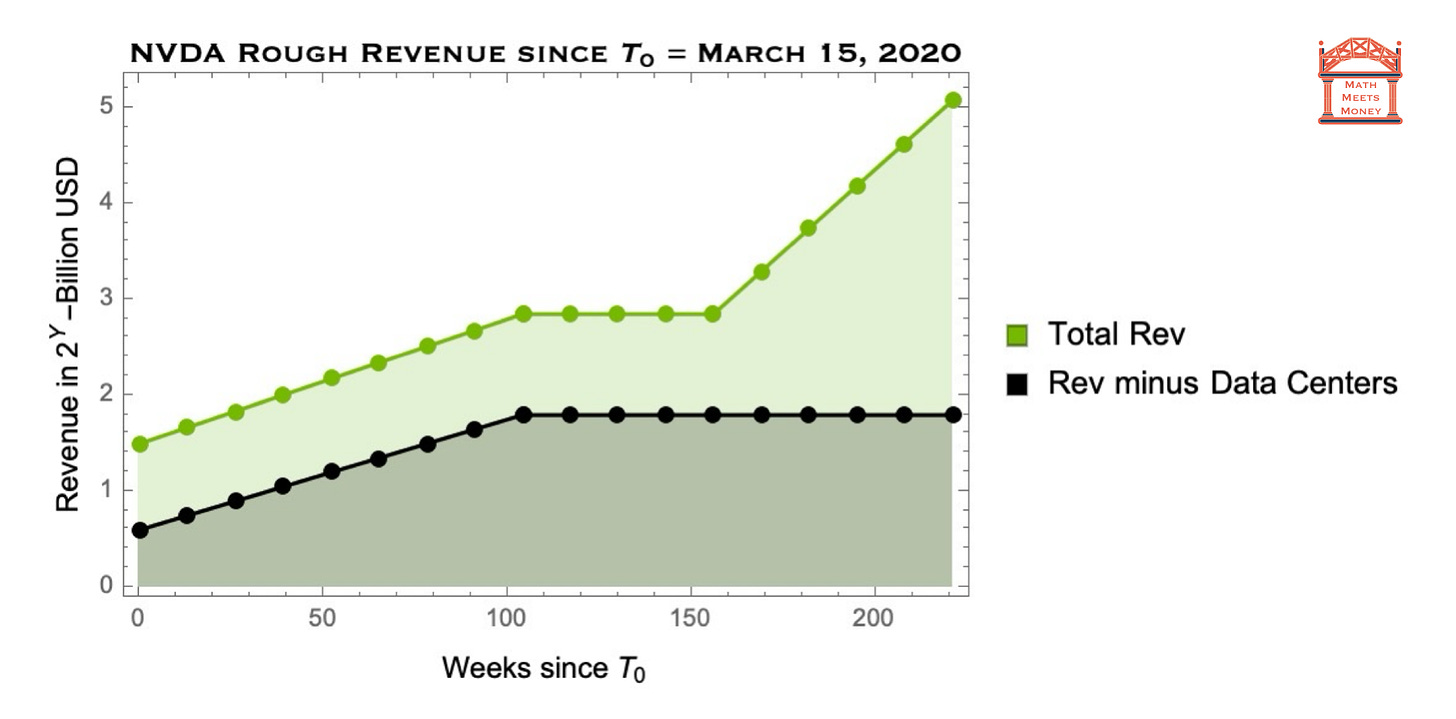

For this reason, after ChatGPT launched, Nvidia has seen atypical growth that departed from the standard paradigm for large chip companies (Buying + Partnering). With the commercial launch of LLM based chatbots, Nvidia discovered, and captured, a new addressable market (seen below):

Performing a regression on this data, we can see even more clearly what happened in the 155th week (November 2022) following March 15, 2020:

Note that the vertical scale is logarithmic. So in brief - the US chip industry was shaken up for the first time in decades due to a confluence of 4 factors:

Moore’s Law states that transistors per chip (which is a proxy for compute per USD) will double every two years.

Using Transformer networks, OpenAI found in 2020 that the loss function follows power-law scaling (a straight line on a log-log plot) in (1) the number of model parameters, (2) the size of the data set, and (3) the compute, in terms of floating point operations performed during the training process.

OpenAI then engineered a product that realized this insight, ChatGPT - this led to the commercial launch of hundreds of other LLMs (which all need GPUs to train and run inference).

So LLM Scaling Laws + Moore’s Law seem to imply infinite productivity gains (thanks to Transformers) with no end in sight, so everyone starts mining for gold, and Nvidia is selling shovels.
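As a rough sketch, the first two factors can be written in symbols. The names N_0, t_0, X_c and \alpha_X are notation assumed here for illustration, with t measured in years and X standing for parameters, data, or compute. Moore’s Law is exponential in time, while the scaling laws in arXiv [2001.08361] are power laws in the resource X:

\(N(t) = N_0 \, 2^{(t - t_0)/2}\)

\(L(X) \approx \left(X_c / X\right)^{\alpha_X} \;\Rightarrow\; \log L \approx \alpha_X \left(\log X_c - \log X\right)\)

Both are straight lines on the right axes (semi-log for Moore’s Law, log-log for the loss), which is what makes naive extrapolation so tempting.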

But the universe is cruel and there’s no free lunch. Indeed, one of the Math Meets Money subscribers pointed me to this newsletter, which describes the plateau in computer memory that happened long ago.

So let us sanity check the “infinite productivity dream” of the AI boom.

Logistic Curves

Every undergrad that takes a course on Nonlinear Dynamics is first introduced to the subject with the following differential equation for population growth:
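Written in the symbols used below (k for the growth rate, P_max for the carrying capacity), and assuming the standard logistic form:

\(\frac{dP}{dt} = k \, P \left(1 - \frac{P}{P_{\text{max}}}\right)\)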

This simple model has some characteristic features:

When P « P_max, the population, P, grows exponentially

When P > P_max, the growth rate is negative and the population, P, shrinks back toward P_max

When P approaches P_max, the carrying capacity, the population asymptotes to it (P → P_max), i.e. growth stops.

Subject to a boundary/initial condition, this can be solved exactly:

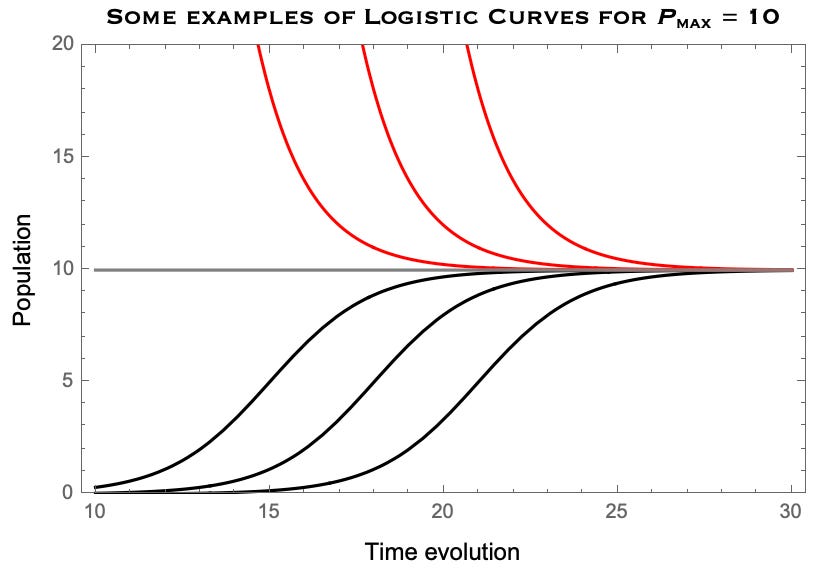

Above, k tells you about the rate of doubling, P_max gives the maximum value, and t_1/2 is a free parameter set by the time at which you will achieve half your market growth (or some other boundary condition). Below is a plot for some curves with k = 1, P_max = 10:

The red trajectories occur when the initial population/value is above the carrying capacity — whereas the black trajectories occur when the initial population/value is below the carrying capacity.
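Here is a minimal sketch of how such trajectories can be generated, assuming the standard closed-form logistic solution written in terms of the initial value P(0). The initial values and sample times are arbitrary choices for illustration, not the ones used in the plot.

```python
import math

def logistic(t, p0, k=1.0, p_max=10.0):
    """Closed-form solution of dP/dt = k*P*(1 - P/p_max) with P(0) = p0 > 0."""
    # c is positive when p0 starts below the carrying capacity
    # and negative when p0 starts above it.
    c = (p_max - p0) / p0
    return p_max / (1.0 + c * math.exp(-k * t))

# Hypothetical initial values: two below the carrying capacity, two above it.
initial_values = [0.1, 1.0, 15.0, 30.0]
times = [0, 2, 4, 8, 16]

for p0 in initial_values:
    row = [round(logistic(t, p0), 3) for t in times]
    print(f"P(0) = {p0:>5}: {row}")
```

Every row ends up near P_max = 10: the ones that start below climb toward it, and the ones that start above fall toward it.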

This type of growth appears everywhere in physical systems - both out there in the universe, and in the ecosystem of business engines. Indeed, one can think of the carrying capacity as the serviceable obtainable market in a business context.

As such, this curve has a couple of commonly used names:

The Logistic Curve: The scientific term to describe a system that initially grows exponentially and then plateaus at some carrying capacity.

and likewise in a business context:

The S-curve: The business term that describes the exponential growth and inevitable plateau of a good or service in the company’s Serviceable Obtainable Market (SOM).

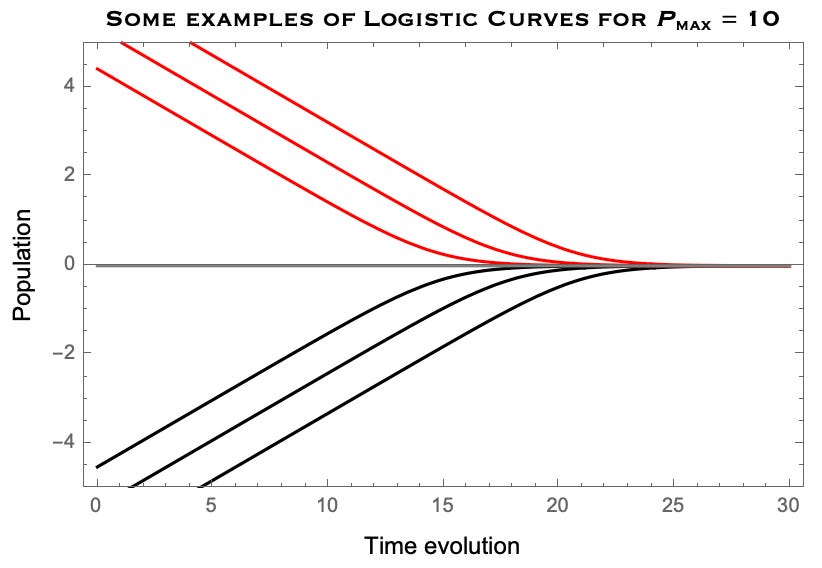

The linear scale above clearly shows why this is called an S-curve — but to find Moore’s Law and AI Scaling Laws (as shown in the real data plots at the beginning of the newsletter), it is best to see the same plot with a logarithmic scale:

where the zero-point serves as the carrying capacity of the system. Now you can start to get a sense of where AI scaling and Moore’s Law live:

The red trajectories resemble the loss function of untrained LLMs for AI Scaling Laws, where the initial value is above the carrying capacity of the data on which the model is being trained.

The black trajectories resemble the growth of transistors on a silicon chip for Moore’s Law, which started out well below the capacity, and will eventually converge on the physical limit based on silicon lithography.

The main takeaway is that whenever you see exponential growth (or decay), just remember that you are on the front-end of a logistic curve, quickly approaching the back-end of diminishing returns. Simply put:

Exponential growth (or decay) never goes on forever - and it can end quickly and unexpectedly unless you have a model for the carrying capacity of the system.

Let’s see this in practice.

The Death of Moore’s Law

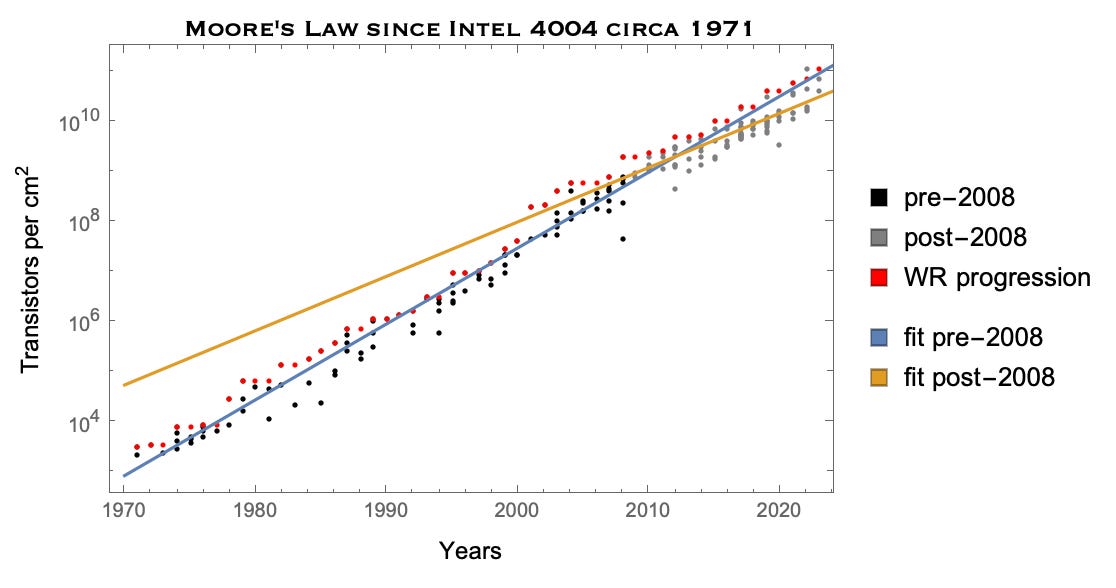

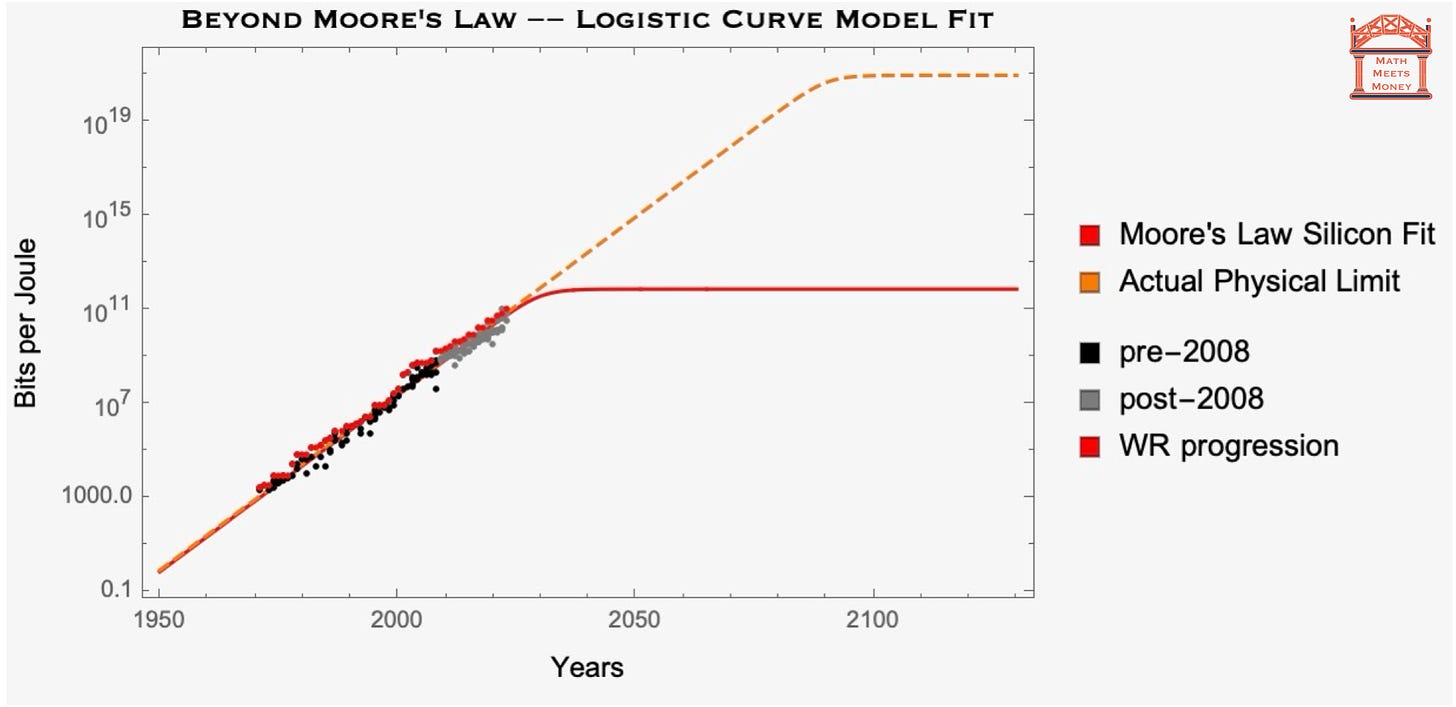

Let’s first look at Moore’s Law in a little more detail. Below I’ve plotted the historical data for CPU development (the graph is similar for GPUs, but only really takes off with video game development in the 1990s):

The black dots are CPUs pre-2008, grey is post-2008, and red is the world-record progression of transistors per square centimeter (sqcm).

Why the split between transistors before and after 2008?

Because as you can see, the growth rate starts to slow down at that point. Performing a linear regression, I found the following rates of doubling for transistors per sqcm:

In blue, pre-2008, doubles every 2 years (Moore’s Law)

In orange, post-2008, doubles every 2 years and 9 months
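For readers who want to reproduce this kind of estimate, here is a minimal sketch of the idea: fit a straight line to log2(density) against year and invert the slope. The data below are synthetic, generated to double every 2 and 2.75 years, and are only there to show that the regression recovers the doubling time. They are not the historical CPU data.

```python
import math

def doubling_time(years, densities):
    """Least-squares slope of log2(density) vs. year, returned as years per doubling."""
    logs = [math.log2(d) for d in densities]
    n = len(years)
    mean_x = sum(years) / n
    mean_y = sum(logs) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, logs))
    sxx = sum((x - mean_x) ** 2 for x in years)
    slope = sxy / sxx  # doublings per year
    return 1.0 / slope

# Synthetic (made-up) series: doubles every 2 years up to 2008, every 2.75 after.
pre_years = list(range(1972, 2009, 4))
pre = [1e4 * 2 ** ((y - 1972) / 2.0) for y in pre_years]
post_years = list(range(2008, 2025, 4))
post = [pre[-1] * 2 ** ((y - 2008) / 2.75) for y in post_years]

print(round(doubling_time(pre_years, pre), 2))
print(round(doubling_time(post_years, post), 2))
```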

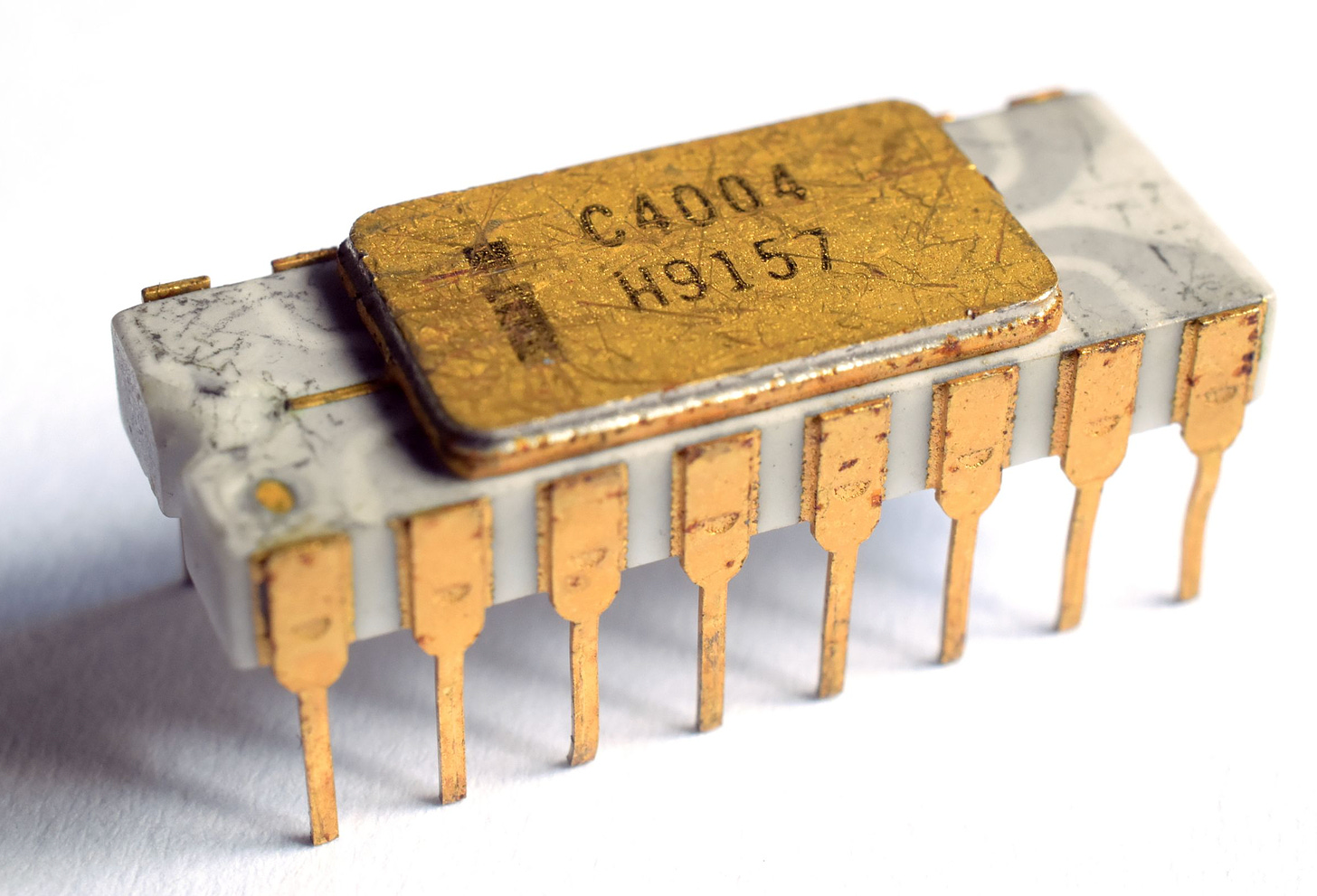

As noted, this slowdown has already been seen both in computer memory, and in the development of GPUs. Before we make a projection for the Death of Moore’s Law, below I provide a picture for your amusement that marked the Birth of Moore’s Law:

Mapping the Logistic Curve to Moore’s Law

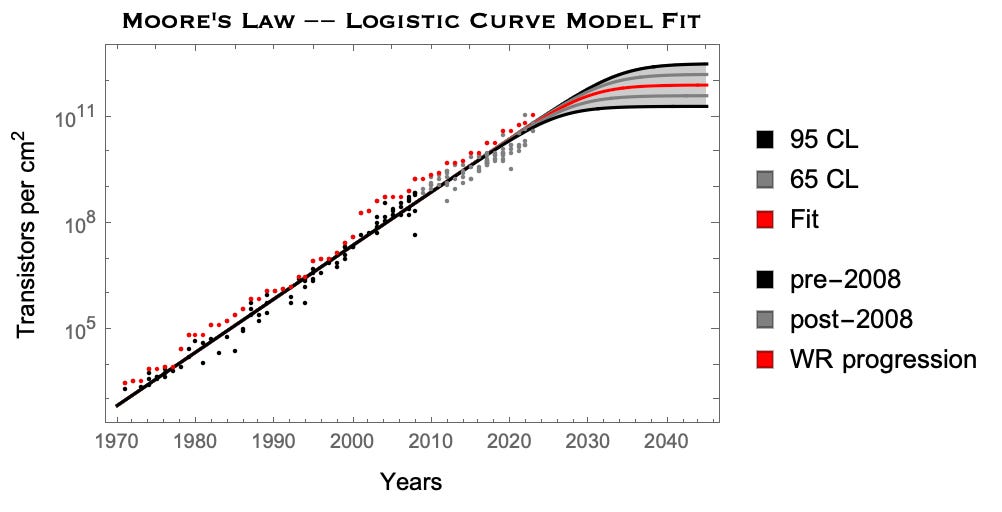

Anyone can draw a line - but here at Math Meets Money we can draw some logistic/S-curves (on logarithmic scales, of course)! Let’s give it a try and see where and when we should expect Moore’s Law to finally end.

We’ve already uncovered the historical doubling rate - every 2 years. From this we can infer that the logistic curve of Moore’s Law should have k = 1/2.
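A quick note on units for k, under the assumption that the curve is written with a base-2 exponent, so that k counts doublings per year. The same early-time growth written in the base-e form has a rate smaller by a factor of ln 2:

\(2^{t/2} = e^{(\ln 2 / 2)\, t} \;\Rightarrow\; k_{2} = \tfrac{1}{2}\ \text{yr}^{-1}, \quad k_{e} = \tfrac{\ln 2}{2} \approx 0.35\ \text{yr}^{-1}\)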

But what about the carrying capacity?

Well, now we have a one-parameter model that we can trivially fit analytically with a regression. Doing so, we find the following projection for Moore’s Law (bounded by 95% confidence intervals):

So based on this, it looks like the carrying capacity will be somewhere between 100 billion and 2 trillion transistors per sqcm.
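One way a one-parameter fit like this can be done analytically is sketched below. It assumes k and the starting value P(0) are held fixed, which turns the logistic solution into a straight line through the origin whose slope is 1/P_max. This is an illustrative approach, not necessarily the regression behind the projection above, and it does not compute confidence bands. All numbers are synthetic.

```python
import math

def fit_carrying_capacity(times, values, p0, k):
    """One-parameter least-squares fit of P_max with k and P(0) = p0 held fixed.

    The logistic solution satisfies
        1/P(t) - exp(-k t)/p0 = (1/P_max) * (1 - exp(-k t)),
    which is a line through the origin with slope 1/P_max.
    """
    xs = [1.0 - math.exp(-k * t) for t in times]
    ys = [1.0 / p - math.exp(-k * t) / p0 for t, p in zip(times, values)]
    inv_p_max = sum(x * y for x, y in zip(xs, ys)) / sum(x * x for x in xs)
    return 1.0 / inv_p_max

def logistic(t, p0, k, p_max):
    """Closed-form logistic curve starting from P(0) = p0."""
    return p_max / (1.0 + (p_max / p0 - 1.0) * math.exp(-k * t))

# Synthetic data only: every number here is an assumption for illustration.
K = math.log(2) / 2          # doubling every 2 years, as a base-e rate per year
P0 = 1.0e5                   # assumed density at t = 0
TRUE_P_MAX = 5.0e11          # assumed ceiling used to generate the data
times = list(range(0, 56, 5))
values = [logistic(t, P0, K, TRUE_P_MAX) for t in times]

estimate = fit_carrying_capacity(times, values, P0, K)
print(f"fitted P_max ~ {estimate:.3e} transistors per sqcm")
```

With noiseless synthetic data the fit recovers the ceiling it was generated from. With real data, the spread of the points around the line is what would set the width of the confidence band.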

We know the width of a silicon atom is about 2 Angstrom (which would set a physical limit on gate size), and the current world record for transistor density is held by Apple’s A17 Pro, which has over 19 billion transistors per sqcm, so this model projection makes some sense!

Computing Reborn

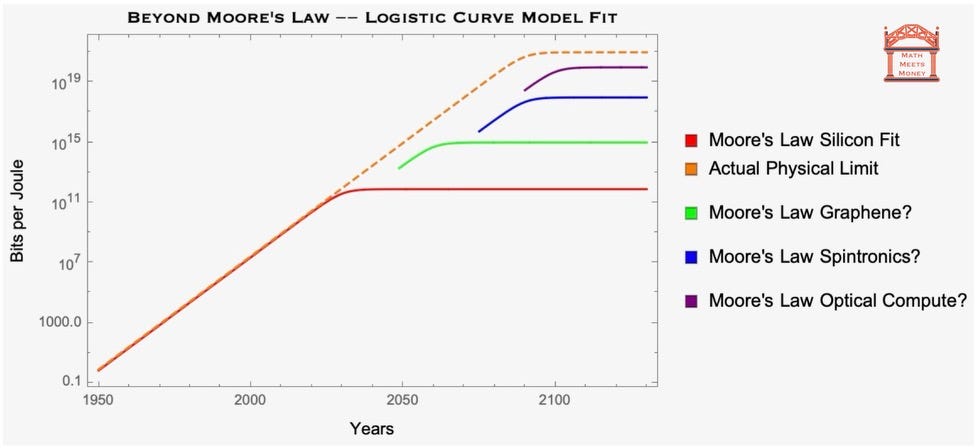

I’ll just close with some thoughts of what all this means for (1) the future of silicon based chips, (2) the commercial development of AI, and (3) the chip industry monopolies and Big Semi-Con.

The Future of Silicon

When making projections for scaling laws, we need to be careful not to extrapolate beyond the carrying capacity of the system.

Back in 1971, before the miniaturization of integrated circuits, making projections for infinite future returns based on Moore’s Law made some sense. Indeed, the founding members of Intel (including Moore) are all dead - so “doubling every two years” was in fact functionally infinite for them.

However, given what we know about the physics of silicon, we are rapidly approaching the limits of silicon based chips — so Moore’s law for silicon is quickly dying (along with its founder).

Sadly, we must always be honest with ourselves and take physics seriously.

On the plus side, while the current data seems to suggest the end of Moore’s Law for silicon-based chips, there’s no reason to believe that we are anywhere close to the absolute limit of energy per bit. In fact, we know that the fundamental limit of (irreversible) computing is simply:

\(E_{\text{min}} (T)= k_B \,T \,\text{ln}(2) \approx 10^{-23} \,T\)

where T is the temperature of the computer. So at room temperature, 300 Kelvin, the absolute limit of bits processed per Joule of energy is about 10^21 bits!!!
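As a quick order-of-magnitude check, here is a small sketch that evaluates the formula above with the exact SI value of the Boltzmann constant. The function names are made up for this example. At 300 Kelvin it gives roughly 3.5 × 10^20 bits per Joule, which rounds to the 10^21 quoted above.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)

def landauer_energy(temperature_kelvin):
    """Minimum energy in joules to erase one bit at the given temperature."""
    return K_B * temperature_kelvin * math.log(2)

def bits_per_joule(temperature_kelvin):
    """Upper bound on irreversible bit operations per joule at this temperature."""
    return 1.0 / landauer_energy(temperature_kelvin)

room = 300.0
print(f"E_min at {room:.0f} K: {landauer_energy(room):.3e} J per bit")
print(f"Limit at {room:.0f} K: {bits_per_joule(room):.3e} bits per joule")
```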

To give you a sense of how crazy that is, I’ve plotted where we currently stand with silicon-based chips versus the limit of computing on a log scale below:

While this might seem ridiculously out of reach, especially considering how far silicon chips are going to fall short, I’ll just note that for heat engines our best engineering design (CCGTs) operates at close to 80% of the physical limit (known as the Carnot efficiency).

So personally I think we should feel very capable of aiming high, even aspiring to reach 80% of the absolute limit of computing (i.e. 80 * 10^19 bits per Joule). The future is bright.

The Future of AI Scaling Laws

I’ve always been skeptical of the scaling laws for LLMs. I famously got into a rather heated debate about this very topic with a Hungarian in a Jacuzzi at a Colorado Ski Resort earlier this year. We already know that Moore’s law is likely to end sometime soon, and moreover, there’s no reason to believe that the LLM loss function will continue to plummet.

What I mean by this, is for every plot that looks like this:

I can build another one from data that looks like this:

Question: What will set the lower bound?

Answer: Whatever is the dimensionality of human language.
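To make the point concrete, here is a small sketch comparing a pure power law with the same power law sitting on top of a floor. The exponent, the scale, and the floor value are all made up for illustration and are not fitted to any real model. Early on the two curves are nearly indistinguishable. Far enough out, the floor dominates and the extrapolation from the pure power law is off by an order of magnitude.

```python
def pure_power_law(x, x_c=1.0, alpha=0.5):
    """Loss that keeps falling forever as x grows."""
    return (x_c / x) ** alpha

def power_law_with_floor(x, floor=0.01, x_c=1.0, alpha=0.5):
    """Same power law, plus a hypothetical irreducible floor."""
    return floor + (x_c / x) ** alpha

print(f"{'x':>8} {'no floor':>12} {'with floor':>12} {'ratio':>8}")
for exponent in range(0, 9, 2):
    x = 10.0 ** exponent
    a = pure_power_law(x)
    b = power_law_with_floor(x)
    print(f"{x:>8.0e} {a:>12.5f} {b:>12.5f} {b / a:>8.2f}")
```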

At some point, no matter how much data you have, you will eventually completely tap out the content of language as a system of symbolic representation. Even if we were to build an LLM that was more robust and intelligent than any human could ever achieve themselves, we still might be limited in our ability to use it productively (as I noted this weekend).

Of all the opinions I have on this AI scaling business, the one that has guided my perspective the most is the following:

We need a better understanding of the data-driven models that underlie LLMs: one that is informed by physics and hardware rather than just aimlessly scaling data, compute and software.

To this end, I’m optimistic.

The Future of Big Semi-Con

There’s nothing necessarily wrong with an industry that has 5 major players that dominate the market, as Big Semi-Con does. And you have to applaud the Nvidias of the world: they’ve earned their market dominance.

There’s also nothing wrong with a healthy culture of M&A. When companies are in a symbiotic relationship with an equal or smaller player, which is common in the B2B ecosystem, vertically integrating a business through M&A can be a net positive for consumers and business owners.

But buying smaller companies to capture unearned customers and revenue can hurt the consumers and founders — and more importantly it hurts the rest of us as it disincentivizes innovators from continuing to innovate.

Moreover, acquisitions rarely lead to paradigm shifting leaps in innovation. There’s one thing I can guarantee: every small company that Big Semi-Con (Nvidia, AMD, Intel, etc.) eats up, will absolutely continue designing silicon-based hardware. And for every small company that disappears into the machine, we are potentially missing the opportunity to build out the next truly novel technology. In short:

Monopolies stagnate engineering solutions, which hinders progress towards the limits of physics.

To close, I’ll just leave you with a visual of what the world of computing could look like with a frothier market for game-changing innovation in engineering solutions:

While we might not get to the limits of computing within the century, if we allow free markets to work within a healthy regulatory framework, we could get there sooner than we expect — and that’s worth fighting for.

Thanks for reading. This is Math Meets Money.